ChatGPT Health Is Dancing Around HIPAA. Well Played, Sam!

ChatGPT Health might be a marketing gimmick. But it also looks like a preview of how tech behemoths could undercut the entire digital health market.

Welcome to AI Health Uncut, a brutally honest newsletter on AI, innovation, and the state of the healthcare market. If you’d like to sign up to receive issues over email, you can do so here.

My readers know that I’ve been preaching AI commoditization and saying it’s only a matter of time before tech giants come in and take over entire sectors of healthcare. Right now, the margins are still too thin for them to really care. But they are testing the waters.

ChatGPT Health, which OpenAI announced yesterday (January 7, 2026), may be a step in that direction. ChatGPT Health is basically a ChatGPT wrapper on a dedicated server, with claims of “enhanced security” and a promise that no OpenAI model will be trained on patient data.

OMG. So many questions.

But first, I want to take a moment to thank all of my supporters. I’m often very critical of certain things happening in AI and healthcare. But I promise you, it’s only because I genuinely care and want to make things better for all of us. So, thank you for your understanding.

I receive a lot of messages daily, and I always respond promptly to every one of them. Most are incredibly kind and supportive.

I want to highlight one recent message in particular. A student from Ukraine reached out asking for full, paywall-free access to my Substack. Of course, I granted it, as I always do.

This brings me to another important message. If you cannot afford this article (perhaps you’re a student or currently between jobs), please reach out. That’s precisely why I created the AI Health Uncut Founding Member Club, currently 19 members and counting. Thanks to generous donations and support from these wonderful individuals, I’m able to provide access to anyone who needs it. By the way, students reach out frequently, and I’m always glad to help.

If you’d like to become a Founding Member of the AI Health Uncut community, you can join through this link. You’ll be making a real impact, helping me continue to challenge the system and push for better outcomes in healthcare through AI, technology, policy, and beyond.

OK. Back to ChatGPT Health…

So many questions following the ChatGPT Health announcement:

“Enhanced security.” Soooo, what kind of security does everyday ChatGPT have then? “Less than enhanced?” What does that even mean?

You promise not to train your models on patient data. But can you still fine-tune them on it?

23andMe also promised a lot of things about the security of customer DNA data. And then one day they sent an unassuming email titled “Change to Terms & Conditions” that, of course, no one ever opens. And voilà, they could sell whatever they wanted to third parties (as we found out the hard way during the 23andMe bankruptcy filing last year).

I predict we’re going to forget about ChatGPT Health in a few weeks, just like we quickly forgot OpenAI’s other awkward crusades into healthcare:

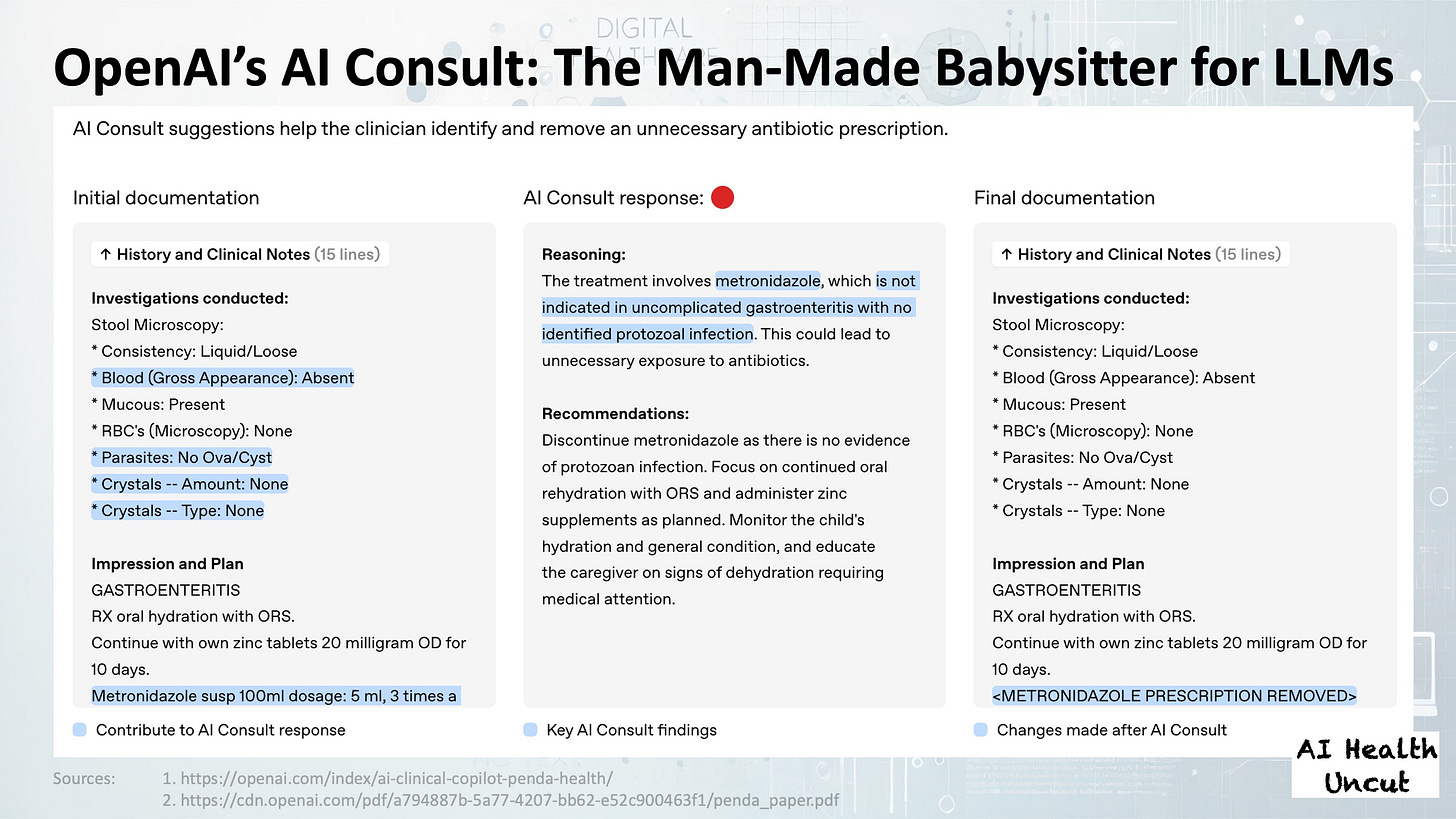

Remember OpenAI’s AI clinical copilot (aka AI Consult)? The thing that turned out to be basically a human curator for ChatGPT? 🙂

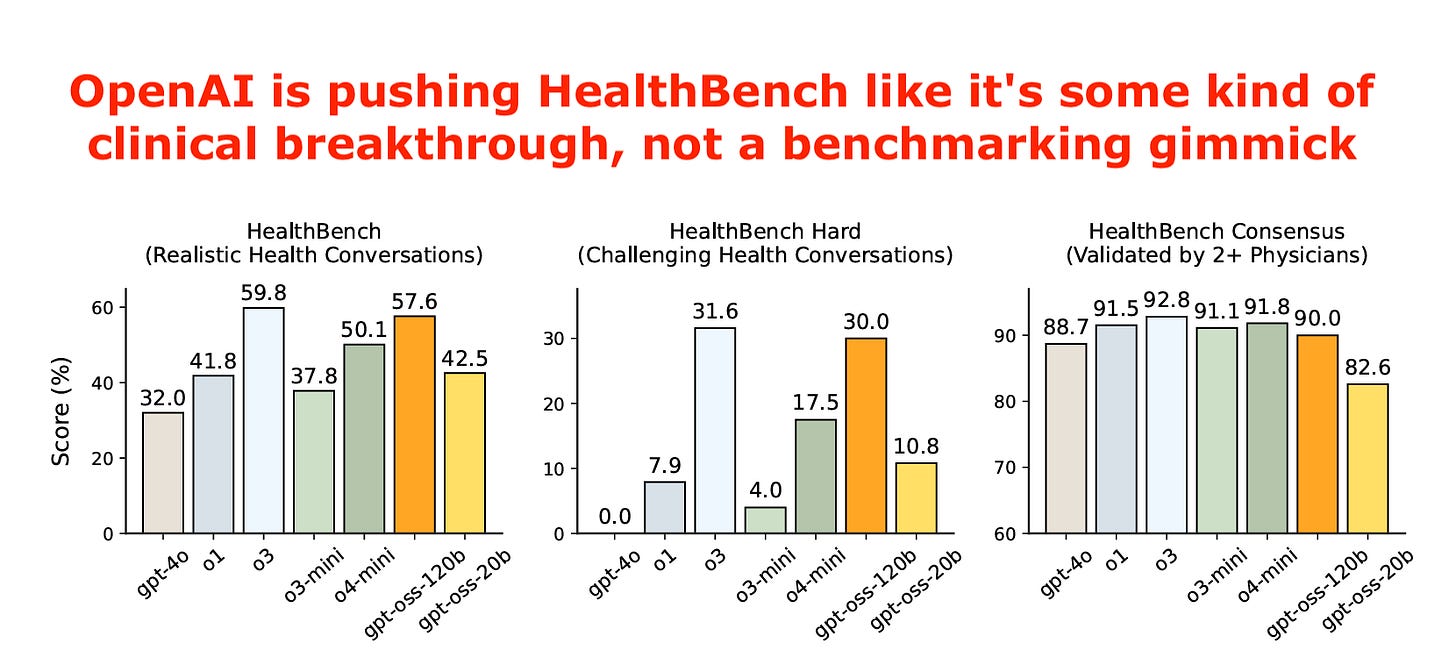

Remember OpenAI’s HealthBench? A carefully designed and curated dataset built on just 5,000 medical conversations. Not a new model. Just data. The evaluation was just prompting, plus, of course, the claim that OpenAI’s models did very well on OpenAI’s own benchmark. Who would have guessed? 🙂

Remember how OpenAI shamefully used a cancer survivor to promote the health-related features of GPT-5, only to cut those features back less than three months later after numerous suicide-related lawsuits?

Of course, we don’t remember any of these. And honestly, in the case of GPT-5, I’m pretty sure OpenAI wants you to forget everything. We forget them because they were marketing gimmicks, designed to convince the medical community that it’s OK to use OpenAI’s gadgets. No new technology was introduced. All of these gimmicks were basically wrappers on ChatGPT.

And I think the story will continue with ChatGPT Health.

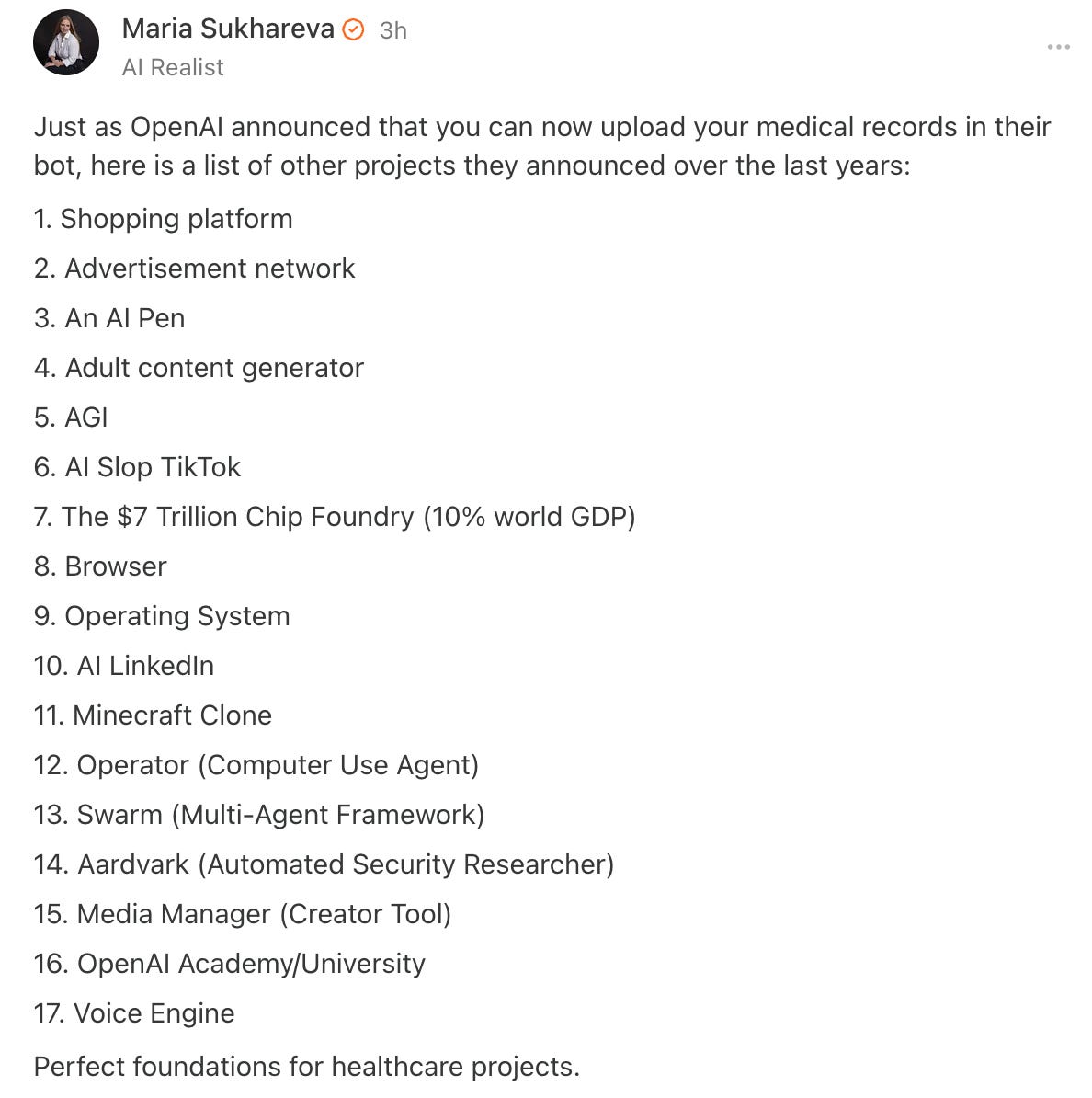

And, courtesy of my colleague Maria Sukhareva, here is a list of OpenAI marketing gimmicks outside of healthcare that no one remembers: 🙂

TL;DR:

1. This OpenAI Announcement Ain’t About ChatGPT Health. It’s About Something Bigger.

2. OpenAI Promises No Training on Patient Data. But What About Fine-Tuning?

3. Wait. ChatGPT Health Still Hallucinates, Right?

4. Why OpenAI’s Lawyers Say ChatGPT Health Is HIPAA-Compliant. And Why Sam Altman Might Not Give a Damn.

5. Lessons from 23andMe and the Ford Pinto: The Only Rule for Patient Data Is Profit.

6. Takeaway: ChatGPT Health Is OpenAI Testing the Healthcare Waters. It Probably Won’t Change Patient Behavior.

1. This OpenAI Announcement Ain’t About ChatGPT Health. It’s About Something Bigger.

I suspect the whole ChatGPT Health hype is serving a different purpose for OpenAI: